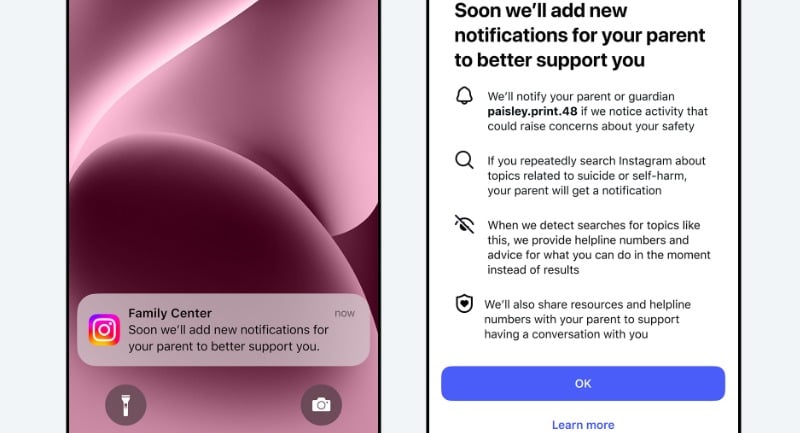

Instagram will start alerting parents if their teen repeatedly searches for terms linked to suicide or self-harm, as Meta expands its Teen Accounts protections.

The notifications will roll out “next week” for families using Instagram’s supervision tools in the US, UK, Australia and Canada, with other regions to follow later in 2026.

How the new parental alerts work

Meta says the alerts will trigger when a teen makes a “few searches within a short period of time” for terms associated with suicide or self-harm.

Parents and teens enrolled in supervision will also receive advance notice that the feature is coming, before alerts begin.

- Who gets alerts: Parents using Instagram’s supervision feature.

- What triggers an alert: Repeated search attempts for terms such as “suicide” or “self-harm”, or for phrases suggesting self-harm.

- How alerts are delivered: Email, text, WhatsApp and in-app notifications, depending on the contact information available.

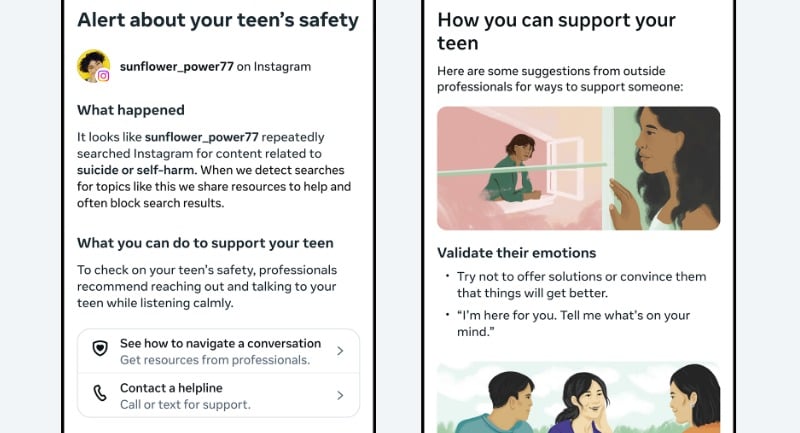

- What parents see: A full-screen message explaining repeated searches were attempted, plus expert resources to guide sensitive conversations.

Meta says its policy is to block search results for terms clearly associated with suicide and self-harm, and redirect users to support resources and helplines instead.

Balancing safety and over-notification

Meta says it wants to give parents enough information to step in, while avoiding unnecessary alerts that could reduce the feature’s usefulness over time.

Dr Sameer Hinduja, Co-Director of the Cyberbullying Research Center, said: “When a young person searches about suicide or self-harm, empowering a parent to step in can be extremely important. The fact that Meta has now built this in is a meaningful step forward and is the kind of change that child safety experts have been pushing for.”

Vicki Shotbolt, CEO of Parent Zone, added: “It’s vital that parents have the information they need to support their teens. This is a really important step that should help give parents greater peace of mind – if their teen is actively trying to look for this type of harmful content on Instagram, they’ll know about it.”

Meta said it analysed Instagram search behaviour and consulted experts from its Suicide and Self-Harm Advisory Group in setting the alert threshold. It will monitor feedback and adjust settings if needed.

Parental alerts for AI conversations are also coming

Meta says it is building similar notifications for certain teen conversations with its AI tools. These would alert parents if a teen tries to discuss suicide or self-harm with the AI. The company says it will share more in the coming months.