While most people in Australia and New Zealand (66%) are optimistic about the benefits of AI, 82% expressed concern that the content they consume online is vulnerable to being altered to fuel misinformation.

Adobe’s Future of Trust Study for Australia and New Zealand surveyed more than 1,000 consumers in the two countries as part of a global study into how people encounter misinformation online and their concerns about the impact of generative AI.

Misinformation online

Among the study’s key findings was that consumers see misinformation as one of the biggest threats facing society. The report found that 96% of respondents consider the credibility of an online source before deciding to trust it or share it with others, while 77% of AUNZ consumers said it was becoming difficult to verify whether the content they were consuming online was trustworthy.

Misinformation was also found to be affecting how consumers use social media. Almost a third (32%) of AUNZ respondents said they have stopped or limited their use of a social media platform because of the amount of misinformation on it.

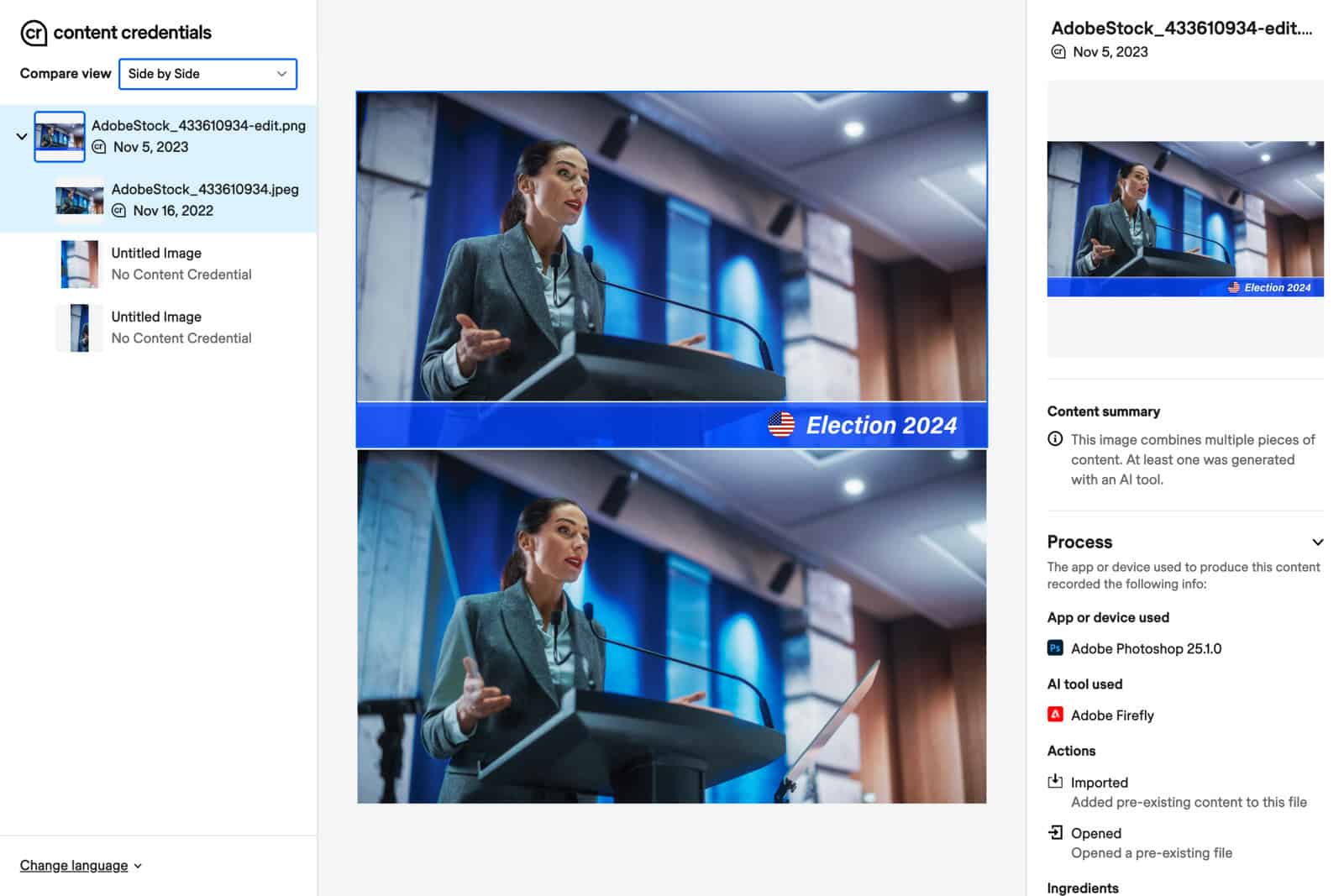

Protecting election integrity

Consumers surveyed also highlighted concern over protecting election integrity, particularly in a year when over 4 billion people globally are headed to the polls.

The study found that 78% of people in AUNZ believe misinformation and harmful deepfakes will affect future elections, and that 80% believe election candidates should be prohibited from using generative AI in their promotional content.

Adobe’s study revealed that almost 9 out of 10 AUNZ consumers (87%) believe that governments and technology companies should work together to protect election integrity against the detrimental effects of deepfakes and misinformation.

Better tools and media literacy skills

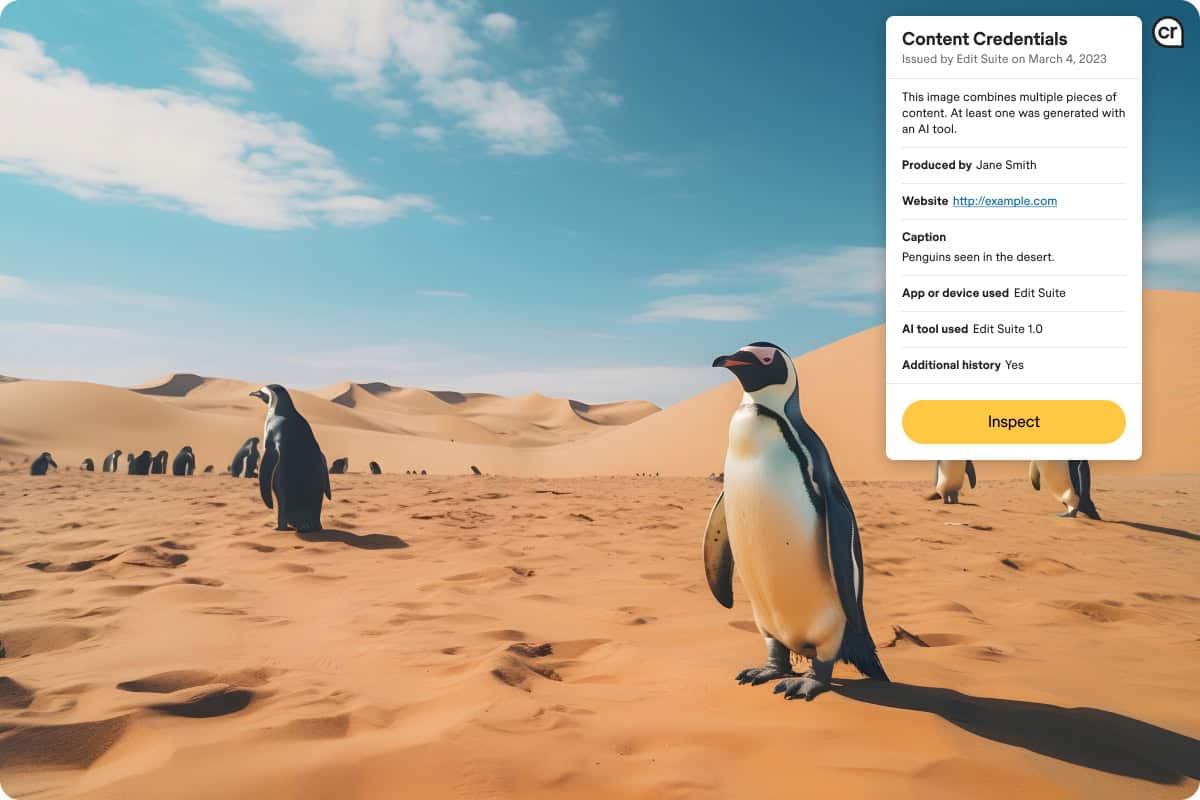

The report also highlighted strong consumer demand for tools to verify the trustworthiness of digital content, as well as the urgent need for proactive measures to address misinformation’s potential impact on election integrity globally.

According to the study, most people consider the credibility of a source before sharing content. Some 87% of AUNZ respondents said it is important to have the tools and media literacy skills to verify whether content is true, while 81% believe it is important to know whether the content they are consuming was generated using AI.

Adobe also found that 87% of AUNZ consumers believe children should be taught media literacy skills as part of the school curriculum, given the potential effect of misinformation on children.

Jennifer Mulveny, Asia Pacific director of government relations at Adobe, said: “Our research shows that consumers are optimistic about the benefits of generative AI, with usage set to accelerate over the next year. As a leader in developing AI technologies responsibly, we deeply consider the impact it has on users, consumers and society at large.

“Our Future of Trust Study underscores the importance of building media literacy among consumers, where they are not only alert to harmful deepfakes but have the tools to discern fact from fiction.

“As the Australian federal election looms, adopting protective technologies like Content Credentials will be crucial to help restore trust in the digital content we are consuming,” she added.

Adobe said that, through its Responsible Innovation work, it has been building media literacy to help educate consumers, improving and evolving provenance technology to track authenticity, and encouraging new behaviours, such as producers turning on Content Credentials and consumers checking and verifying content.

Adobe also takes a “two-pronged” approach to responsible AI innovation: its AI Ethics framework and the Content Authenticity Initiative (CAI), which it co-founded with The New York Times. The CAI provides Content Credentials, a digital label showing what technology was used throughout a piece of content’s journey, including name, location, and edits made.

CAI partners include Microsoft, Publicis Groupe, Leica, NPR, Nikon, and Universal Music Group.